The Agent’s Toolbelt: What Your Crew Actually Needs to Be Useful

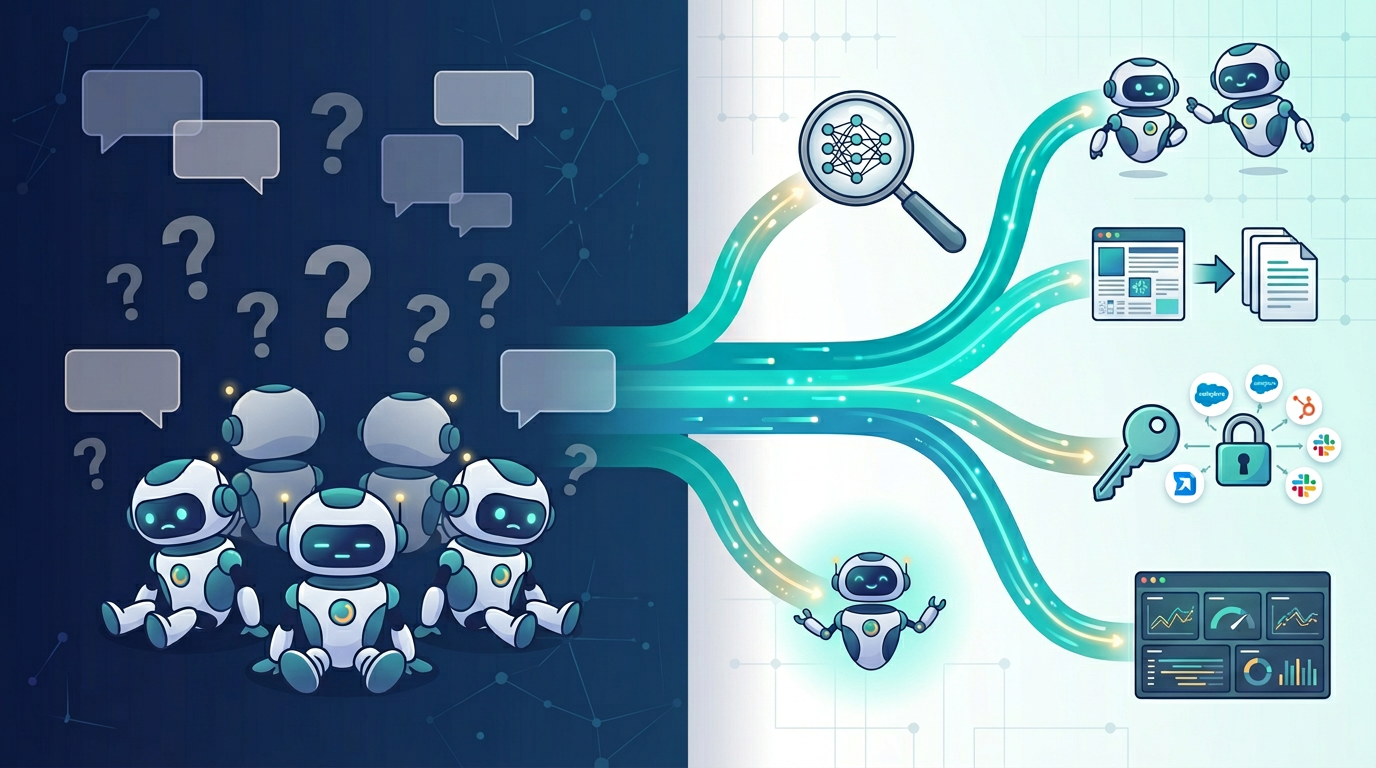

An agent is only as useful as the task-relevant knowledge it can access. Define a “researcher” with no access to meaningful search, and you get a researcher that can’t research. Define an “analyst” with no way to read documentation, and you get an analyst that guesses. Wire them into a CrewAI crew together and you get a committee that produces confident nonsense at scale, not because the orchestration failed, but because nobody gave the agents anything to work with.

CrewAI’s role-based abstraction is genuinely well-designed. It maps to how people actually divide work. But the role definition only sets the intent. The tool layer determines whether the agent can fulfill it.

This is a survey of the tools I’ve been evaluating — what problem each one solves, and why it matters for multi-agent work.

Exa: Semantic Search Built for LLMs

The problem: Most agent search tools wrap Google’s API. The results are keyword-matched, SEO-optimized, and designed for human browsers — not for LLMs that need dense, relevant information on a specific topic.

What Exa does: Neural search that understands queries by meaning rather than keywords. An agent searching “approaches to multi-tenant data isolation in ERPNext” gets technical documentation and forum posts with implementation details, not landing pages selling consultations.

Why it matters for agents: Exa offers search modes with different latency-quality profiles — instant (200ms) for real-time agents, deep (up to 60s) for thorough research. It maintains curated indexes: over a billion people, 50 million companies, 100 million research papers. An agent querying these is searching structured, dense information — not “the web” in the general sense. The CrewAI integration is one line: from crewai_tools import EXASearchTool.

The deeper point is that Exa rewards precise task specification. A vague query still returns vague results. But “find Python-based approaches to custom DocType creation in Frappe Framework with validation logic examples” returns meaningfully different results on a neural engine than a keyword engine. The tool amplifies the same specification discipline I’ve been writing about throughout this series.

Firecrawl: Clean Extraction from the Web

The problem: Finding the right page is half the battle. The other half is getting the content in a format an LLM can actually use. Raw HTML — navigation menus, cookie banners, ads, JavaScript-rendered content — burns context window on noise.

What Firecrawl does: Turns websites into clean markdown or structured JSON. Handles JavaScript-heavy pages (96% coverage), respects robots.txt. Its /agent endpoint accepts natural language descriptions of what data you want — no URLs required.

Why it matters for agents: The natural pairing is Exa for finding relevant pages and Firecrawl for reading them. One finds; the other extracts. An agent with access to both can locate a specific vendor advisory and pull out the actionable content, rather than returning a URL the next agent can’t parse. Firecrawl is also open source and self-hostable, which suits projects where data can’t flow through third-party APIs.
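The find-then-read pairing can be sketched without committing to either vendor's SDK. This is a minimal illustration, assuming a search callable that returns URLs and an extract callable that returns markdown; `find_then_read`, `Document`, and the two fake functions are hypothetical names, not part of Exa, Firecrawl, or CrewAI.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Document:
    url: str
    markdown: str


# Assumed shapes: search maps a query to URLs, extract maps a URL to clean markdown.
SearchFn = Callable[[str], List[str]]
ExtractFn = Callable[[str], str]


def find_then_read(query: str, search: SearchFn, extract: ExtractFn,
                   max_pages: int = 3) -> List[Document]:
    """Search for pages, then extract each one so the next agent gets content, not URLs."""
    docs = []
    for url in search(query)[:max_pages]:
        text = extract(url)
        if text.strip():  # skip pages that produced no usable content
            docs.append(Document(url=url, markdown=text))
    return docs


# Stand-ins for real search and extraction clients, for illustration only.
def fake_search(query: str) -> List[str]:
    return ["https://example.com/a", "https://example.com/b"]


def fake_extract(url: str) -> str:
    return "" if url.endswith("/b") else "# Advisory\nPatch to 1.2.3"


docs = find_then_read("vendor advisory", fake_search, fake_extract)
```

In a real crew the two callables would wrap whichever search and extraction tools the agent is given; keeping them as plain functions makes the pipeline easy to test offline.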

Composio: Managed Auth for SaaS Integration

The problem: Agent workflows that need to interact with real SaaS tools — creating GitHub issues, sending Slack notifications, updating HubSpot contacts — hit the OAuth wall immediately. You end up spending more time on authentication plumbing than on agent logic.

What Composio does: Managed authentication and integration for 500+ SaaS applications. Authenticate once, and agents get scoped access to GitHub, Slack, Gmail, HubSpot, Salesforce, Jira, Notion, and more.

Why it matters for agents: In a crew, different agents need different integrations with different permission boundaries. A PM agent needs Jira write access; it shouldn’t have GitHub delete permissions. Composio enforces per-integration action scoping — the same least-privilege principle that applies to MCP configuration, extended to SaaS tools. It’s framework-agnostic (CrewAI, LangChain, OpenAI Agents SDK), which matters when the framework landscape is still consolidating.
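The least-privilege idea is independent of any particular integration platform. As a rough sketch, a deny-by-default allowlist per role could look like the following; the role names and action strings are made up for illustration and do not correspond to Composio's actual action identifiers.

```python
# Hypothetical role-to-action allowlist; action names are illustrative only.
ALLOWED_ACTIONS = {
    "pm": {"jira.create_issue", "jira.update_issue"},
    "coder": {"github.create_pull_request", "github.comment"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ALLOWED_ACTIONS.get(role, set())


def call_tool(role: str, action: str) -> str:
    """Gate every tool call through the allowlist before doing any work."""
    if not is_allowed(role, action):
        raise PermissionError(f"{role} may not call {action}")
    return f"{role} called {action}"
```

The point of the gate is that a PM agent asking for a GitHub delete fails loudly at the boundary instead of relying on the prompt to discourage it.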

Serper: When You Need Google Specifically

The problem: Not every search task benefits from neural search. Real-time results, local business information, news, current pricing — Google is still better at these.

What Serper does: Clean Google Search API with structured results.

Why it matters for agents: Different agents need different search profiles. Exa for depth and meaning; Serper for recency and breadth. A research agent and a news-monitoring agent shouldn’t share the same search tool.
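One way to express "different search profiles" is a small routing rule keyed on what the task needs. The sketch below is an assumption about how such a rule might look; `SearchTask` and `pick_search_tool` are hypothetical, and the returned strings are just labels rather than real tool objects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchTask:
    query: str
    needs_recency: bool = False  # news, current pricing, real-time results
    needs_local: bool = False    # local business information


def pick_search_tool(task: SearchTask) -> str:
    """Route recency- or locality-sensitive tasks to Google-backed search, the rest to neural search."""
    if task.needs_recency or task.needs_local:
        return "serper"
    return "exa"


news = SearchTask("ERPNext release announcements this week", needs_recency=True)
research = SearchTask("approaches to multi-tenant data isolation in ERPNext")
```

In practice the routing would more likely happen at crew design time, by giving each agent only the search tool that fits its role.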

Observability: Tracing Agent Decisions

The problem: A crew can produce wrong output without any individual agent throwing an error. The PM agent misinterprets a requirement; the analyst propagates it; the coder implements it faithfully. Everything “worked.” The output is wrong. Traditional monitoring won’t surface this — you need to trace the decision chain.

LangSmith (LangChain’s platform) provides step-by-step traces, latency breakdowns, cost tracking per agent, and automatic failure pattern clustering. Langfuse is the open-source, self-hostable, OTel-native alternative for teams that need data residency control. AgentOps offers lightweight session-level monitoring across CrewAI runs.

The observability-as-architecture-validation pattern applies directly: identify the assumption (the agent will interpret context correctly), define what violation looks like (output diverges from specification), instrument it, revisit.
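The pattern can be illustrated with a toy trace. This sketch assumes each agent's output is recorded as a string and that "violation" can be expressed as a predicate over that output; `Trace`, `Step`, and `first_divergence` are hypothetical names and are not the API of LangSmith, Langfuse, or AgentOps.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Step:
    agent: str
    output: str


@dataclass
class Trace:
    steps: List[Step] = field(default_factory=list)

    def record(self, agent: str, output: str) -> None:
        self.steps.append(Step(agent, output))


def first_divergence(trace: Trace,
                     satisfies_spec: Callable[[str], bool]) -> Optional[str]:
    """Return the first agent whose output violates the spec, or None if none do."""
    for step in trace.steps:
        if not satisfies_spec(step.output):
            return step.agent
    return None


# The specification asked for CSV export; the PM misreads it and everyone follows.
trace = Trace()
trace.record("pm", "Export invoices as PDF")
trace.record("analyst", "Spec: PDF export endpoint")
trace.record("coder", "def export_pdf(): ...")

culprit = first_divergence(trace, lambda out: "csv" in out.lower())
```

No step raised an error, yet walking the chain against the specification points at the PM step as the origin. A real check would need something richer than a substring match, but the shape of the instrumentation is the same.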

How the Pieces Fit Together

To make the tool-to-role mapping concrete, here’s how these tools might wire into a CrewAI crew for an ERPNext implementation workflow:

| Agent Role | Primary Tools | Why |
|---|---|---|
| Researcher | Exa (deep), Firecrawl | Semantic search for technical content, clean extraction of documentation |
| PM | Composio (Jira/Linear), Exa (fast) | Ticket creation, quick research for requirement clarification |
| Analyst | Exa (auto), Firecrawl | Pattern research, specification validation against existing documentation |
| Coder | Native code execution, Firecrawl | Implementation, documentation reference during coding |
| Adversarial TDD | Native code execution | Test writing doesn’t need external search; it needs the specification |
| Scribe | Firecrawl, Composio (Notion) | Knowledge base maintenance, structured documentation output |

The adversarial TDD agent would be deliberately tool-sparse. Its job is to break the implementation based on the specification, not to go looking for new information. Tool selection includes knowing when not to add tools — an agent with access to irrelevant knowledge is an agent with more opportunities to get distracted.
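The mapping in the table can also be kept as plain data, which makes the "deliberately tool-sparse" rule checkable. This is a sketch under assumed names: the tool labels are illustrative strings, not real tool classes, and `roles_with_search` is a hypothetical helper.

```python
# Role-to-tool mapping from the table, with illustrative tool labels.
CREW_TOOLS = {
    "researcher": ["exa_deep", "firecrawl"],
    "pm": ["composio_jira", "exa_fast"],
    "analyst": ["exa_auto", "firecrawl"],
    "coder": ["code_execution", "firecrawl"],
    "adversarial_tdd": ["code_execution"],
    "scribe": ["firecrawl", "composio_notion"],
}

# Labels that count as external search for the purposes of this check.
SEARCH_TOOLS = {"exa_deep", "exa_fast", "exa_auto", "serper"}


def roles_with_search(mapping):
    """List the roles that have at least one search tool attached."""
    return sorted(role for role, tools in mapping.items()
                  if SEARCH_TOOLS & set(tools))


searchers = roles_with_search(CREW_TOOLS)
# Guard the design decision: the TDD agent must stay free of search tools.
assert "adversarial_tdd" not in searchers
```

Keeping the assertion next to the mapping means that adding a search tool to the TDD agent later becomes an explicit decision rather than an accident.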

Where This Gets Interesting: Security Operations

The mapping above is for code generation workflows. But having spent the better part of six years building and operating a multi-tenant SIEM platform, I keep noticing how naturally the same tools map onto security operations.

The scaling challenge at the core of any managed security operation is that adding tenants adds alert volume, but analyst headcount doesn’t scale the same way. The SIEM platform I built at Decian solved the infrastructure side — shared pipelines, Document-Level Security for tenant isolation, replay-safe ingestion — but the analyst workflow side remains human-intensive. Alerts still need triage. Threat context still needs research. Cases still need documentation.

Consider the same tool layer reframed:

- Exa becomes a threat intelligence researcher — an agent searching “lateral movement techniques using compromised service accounts in Active Directory environments” returns dense threat research, not keyword noise.

- Firecrawl extracts IOCs, remediation steps, and context from vendor security advisories and NIST pages — the same find-then-read pattern, applied to security content.

- Composio integrates with case management (IRIS), threat intelligence enrichment (MISP), and analyst notification (Slack/Teams). Where static n8n-style automation follows fixed rules, an agent crew could make contextual decisions — “this alert correlates with the advisory published yesterday, enrich with MISP, escalate with context attached.”

- Observability becomes essential rather than nice-to-have. In code generation, a bad agent decision means wasted time. In alert triage, a bad agent decision — classifying a real threat as a false positive — is a potential breach.

| Agent Role | Primary Tools | SOC Equivalent |
|---|---|---|
| Alert Triage | Exa (fast), native log analysis | L1 Analyst |
| Threat Researcher | Exa (deep), Firecrawl | Threat Intel Analyst |
| Case Manager | Composio (IRIS, Slack) | SOC Lead |
| Remediation Advisor | Exa (auto), Firecrawl | L2/L3 Analyst |
| Audit Scribe | Firecrawl, Composio (Jira) | Compliance / Documentation |

I haven’t built this. But combining multi-agent orchestration with years of operating the platform these agents would augment is where my next serious exploration goes.

The Compounding Point

The value of good tools compounds through the pipeline. When a researcher returns high-quality, task-relevant content that’s been cleanly extracted, every downstream agent receives better input. Better input means more accurate specifications or more informed triage. The inverse is equally true — a researcher with poor tools poisons the entire chain.

An agent is only as useful as the knowledge it can access. The orchestration frameworks are mature enough. The models are capable enough. The gap between demo-quality and production-quality multi-agent work is in the tool layer — giving each agent access to the specific, relevant knowledge its role actually requires.

Open Questions

Cost. These tools have usage-based pricing. Multi-agent crews making dozens of tool calls per run could accumulate costs quickly. Which search modes and tool calls justify their cost is going to require experimentation.
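A back-of-the-envelope estimate helps frame that experimentation. The prices below are placeholders invented for the arithmetic, not actual vendor pricing, and `run_cost` is a hypothetical helper.

```python
# Hypothetical per-call prices in USD; real pricing varies by vendor and plan.
PRICE_PER_CALL = {"exa_deep": 0.015, "exa_fast": 0.005, "firecrawl": 0.002}


def run_cost(call_counts):
    """Sum price times call count across every tool used in one crew run."""
    return sum(PRICE_PER_CALL[tool] * n for tool, n in call_counts.items())


# One run: 10 deep searches, 20 fast searches, 30 page extractions.
calls = {"exa_deep": 10, "exa_fast": 20, "firecrawl": 30}
cost = run_cost(calls)
monthly = cost * 500  # assuming 500 runs per month
```

With these made-up numbers a single run is about 0.31 USD and 500 runs a month is about 155 USD, and the deep searches account for roughly half of it. Substituting measured call counts and real prices shows which search mode dominates the bill.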

Framework portability. CrewAI is where I’m exploring today, but LangGraph’s stateful execution model is attractive for complex workflows. The tool layer needs to be portable — which is why framework-agnostic SDKs matter.

Tool call attribution. Observability tools can trace what happened. The harder question: which specific tool calls contributed to good versus bad outcomes?

Related

- Agentic X — The owner/builder model that this tool layer supports

- Agentic Coding Made Me a Better Product Manager — Why specification discipline matters before tools do

- The Interview Question Nobody’s Asking — The industry gap this tooling knowledge falls into

- Agentic Engineering Methodology — Full methodology including MCP scoping and multi-agent orchestration

- Distributed Wazuh SIEM Platform — The multi-tenant security platform that informs the security operations mapping

- Observability as Architecture Validation — The observability pattern applied here to agent workflows